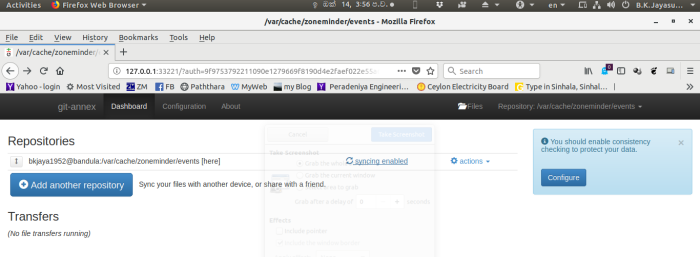

One can access the data using ownCloud, but there are some restrictions: you can add files and folders, and delete them only if they weren't added to the git-annex repo yet. That means newly added data (not yet committed to the repo) can be deleted by the client which added it. For anything else you'll have to work with git-annex.

One of the important constraints before pushing my backups into the cloud was security. In order to be able to encrypt my stuff before pushing it into the cloud, I had to create a special remote (see below) which offers encryption. Generating a GPG key was the easiest step. Afterwards I had to make sure that my keychain was available to git-annex for a specific period of time.

I'm using git-annex in version 7.20190129, as provided on my Debian Stable (Buster) machine, to keep big files under version control and have them distributed over multiple machines and drives. This works well as long as I have at least one "real" git-annex repository (not a special remote). What I'd be interested in is using just one git-annex repository on my local machine plus additional special remotes (e.g. the bup special remote or the rsync special remote or, as soon as it lands on Debian Stable, the borg special remote).

My workflow is as follows: `cd /path/to/my/local/folder`, `git commit -m 'this works on my local repository'`, `git annex initremote mybupbackuprepo type=bup encryption=none buprepo=/path/to/my/special/remote/location`. Then I'm able to use my bup special remote as I would use an additional repository.

But now I'd like to access my bup repo without using the first, local repo (e.g. in case my local machine would break down). As far as I understood from following the official guide, the following should work: `cd /path/to/new/folder/to/extract/the/backup`
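A minimal sketch of how such a recovery could look, under one important assumption: a special remote stores only file contents, so a clone of the git repository itself (with its `git-annex` branch) must still be reachable somewhere. The `GIT_URL` location, the `restore_from_bup` function and the `run` helper are hypothetical names introduced for illustration; the remote name and paths are taken from the workflow above. By default the script only prints the commands it would run.

```shell
#!/bin/sh
# Sketch: recover annexed files on a fresh machine via the bup special remote.
# Assumption: a clone of the git repository (not only the special remote)
# survives at GIT_URL, because the special remote holds file contents only.
set -e

DRY_RUN="${DRY_RUN:-1}"   # 1 = only print the commands, 0 = execute them

run() {
    # Print the command; execute it only when DRY_RUN=0.
    echo "+ $*"
    if [ "$DRY_RUN" = "0" ]; then "$@"; fi
}

restore_from_bup() {
    git_url="$1"; target="$2"; buprepo="$3"
    run git clone "$git_url" "$target"
    if [ "$DRY_RUN" = "0" ]; then cd "$target"; fi
    # Initialise git-annex in the fresh clone.
    run git annex init "restored copy"
    # Re-enable the already known special remote in this clone.
    run git annex enableremote mybupbackuprepo "buprepo=$buprepo"
    # Fetch the file contents from the bup special remote.
    run git annex get --from=mybupbackuprepo .
}

restore_from_bup \
    "${GIT_URL:-/path/to/a/clone/of/the/git/repo}" \
    /path/to/new/folder/to/extract/the/backup \
    /path/to/my/special/remote/location
```

Running it with `DRY_RUN=0` executes the commands instead of printing them. If no copy of the git repository survives, this sketch does not apply, since the special remote alone does not know file names or history.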

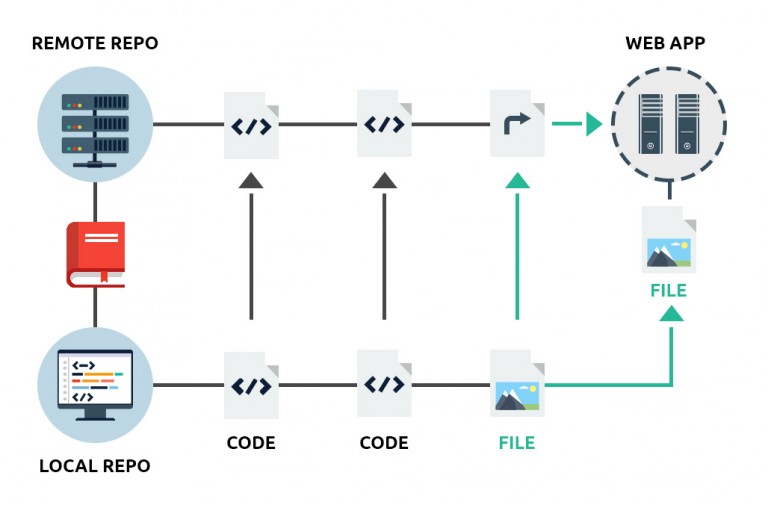

Well, this post is where the link between git-annex and ownCloud should be emphasized: use ownCloud as your "frontend" tool for accessing the data while letting git-annex do the "backend" (aka backup) job. While this might sound like a pretty easy task, it does have some peculiarities to be taken into consideration.

There is one centralized repo on my Raspberry Pi, to which my HDD is attached. Usually I push stuff to the HDD using rsync from my laptop, or via ownCloud from my mobile clients. Afterwards the encrypted repo and the data itself are pushed to some external server. The data is encrypted using my GPG key. From the server I could then replicate the repo and the data to some cloud provider like AWS, Dropbox or whatever.

Additionally, one could push the git repo to GitHub in an encrypted form, without the data itself. It will then only contain the git information (symlinks) but no annexed data. ownCloud will act as a front-end and can be used by any ownCloud client. The data itself is then managed by git-annex, which basically acts as a back-end.
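The step of pushing encrypted data to an external server could be sketched roughly like this. The post does not say which special remote type is used, so the rsync type here is an assumption, as are the remote name `cloudbackup`, the server address `backup@example.org:/srv/annex`, the `GPG_KEY_ID` variable and the `run` helper. By default the script only prints the commands.

```shell
#!/bin/sh
# Sketch: create an encrypted special remote and copy annexed data to it.
# Assumptions: rsync special remote, placeholder server and GPG key id.
set -e

DRY_RUN="${DRY_RUN:-1}"   # 1 = only print the commands, 0 = execute them

run() {
    # Print the command; execute it only when DRY_RUN=0.
    echo "+ $*"
    if [ "$DRY_RUN" = "0" ]; then "$@"; fi
}

setup_encrypted_remote() {
    name="$1"; rsyncurl="$2"; keyid="$3"
    # Content is encrypted locally before it is sent to the remote.
    run git annex initremote "$name" type=rsync "rsyncurl=$rsyncurl" \
        encryption=hybrid "keyid=$keyid"
    # Send all annexed file contents of the current directory tree.
    run git annex copy --to "$name" .
}

setup_encrypted_remote cloudbackup \
    "backup@example.org:/srv/annex" \
    "${GPG_KEY_ID:-YOUR_GPG_KEY_ID}"
```

With `encryption=hybrid` the server only ever sees encrypted content under obfuscated names, which fits the idea that the external server and any cloud provider behind it need not be trusted.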

Besides that, you don't want to keep your backups in a single place; spreading them out mitigates the impact of a single point of data loss. I've been using git-annex for some years, not as a pro user but rather in "it just works" mode. Meanwhile I've heard of ownCloud, and I like it because one can access its data via web, mobile client or whatever. In connection with a VPN solution (I prefer OpenVPN) you have a secure way of remotely accessing your data from everywhere.

Backing up a whole bunch of photos and videos can be a difficult task. Taking care of the consistency of your backups is an even more complicated one.
